8 Best Ways To Collect Employee Feedback In The Workplace

Employees are navigating a world that has made it genuinely difficult to know what to trust, and that skepticism does not stay outside the office door. Gallup’s 2025 State of the Global Workplace report found that only 21% of employees are engaged at work, the lowest figure recorded since COVID, and the organizations feeling that most acutely are the ones that never built a reliable way to hear from their people.

Most organizations already have some way to collect employee feedback. An annual engagement survey, maybe a suggestion box, possibly a post-town-hall form that gets sent out and mostly ignored. The problem is that employees have learned, often through experience, that sharing honest feedback leads to nothing. A survey goes out, responses come in, and then silence. Over time, participation drops, answers get safer, and the data becomes less useful precisely because people stopped trusting the process.

This guide covers the best ways to collect employee feedback across every channel and audience type, including how to collect anonymous employee feedback when trust is low, how to reach frontline teams with real-time employee feedback tools, and how to build a repeatable employee feedback loop that people actually participate in because they can see it leading somewhere.

Why Is Collecting Employee Feedback Important?

Employee feedback is the structured process of gathering employees’ perspectives on their work experience, management, communication, and organizational decisions, and then acting on what is heard.

Understanding why employee feedback is important starts with a simple reality: the people doing the work every day have information that leadership doesn’t. They know which processes are broken, which tools are slowing them down, which managers are creating friction, and what would actually make their jobs better. Without a reliable way to surface that information, organizations are making decisions with incomplete data.

And the cost of getting that wrong is not abstract. The World Economic Forum’s Global Risks Report ranked misinformation and disinformation as the top short-term global risk two years running, and employees carry that reality with them when they show up to work. They arrive already primed to question what they are told, which means the organizations that create genuine opportunities to be heard are the ones best positioned to build the kind of internal trust that the outside world is doing very little to support.

But the importance of employee feedback goes beyond gathering information. Here’s what it directly affects:

- Retention: Employees who feel heard are significantly more likely to stay. In 2026, with hiring costs still high and talent markets remaining competitive in most industries, voluntary turnover is one of the most expensive problems an organization can have. Feedback channels signal that the organization values input, which builds the kind of trust that keeps people from quietly job searching.

- Engagement: Asking for feedback, and visibly acting on it, is one of the most direct drivers of engagement. It tells employees their experience matters and that leadership is paying attention, which is especially important as hybrid and distributed work continue to leave employees feeling disconnected from organizational decisions.

- Performance: Teams that give and receive feedback regularly make faster course corrections. Problems get identified earlier, before they compound into larger organizational issues.

- Culture: A workplace where honest feedback is welcomed and acted on is built through consistent, structured feedback practices over time. Without that structure, most employees default to sharing frustrations with colleagues rather than with the people who can actually do something about them.

- Decision making: Whether it’s a new benefits rollout, a return-to-office policy, or a change in internal tools, decisions made with employee input have higher adoption rates and fewer unintended consequences.

The Employee Feedback Loop (The Only Process That Scales)

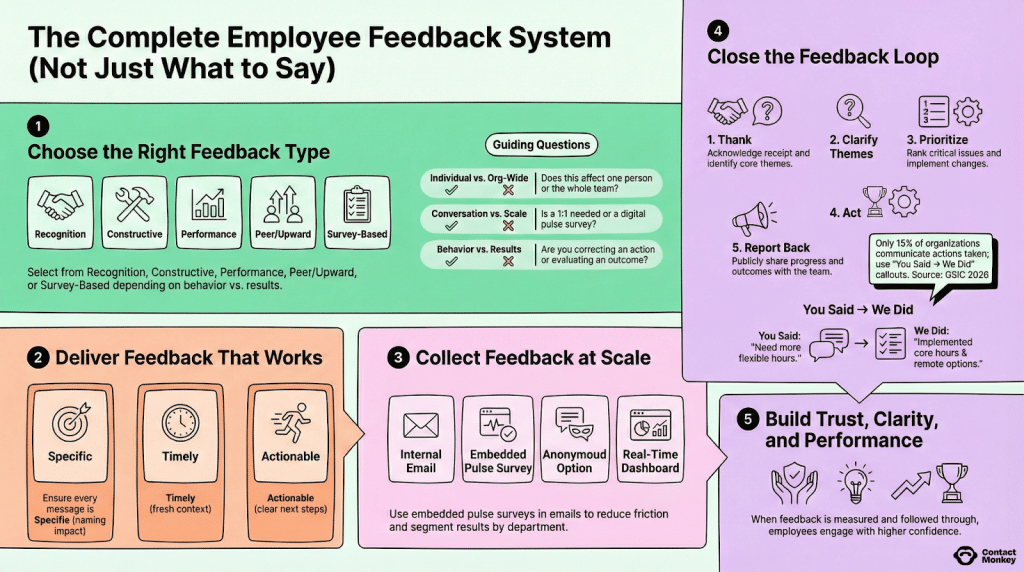

A strong employee feedback loop functions as a five-step system that connects asking to acting, and acting to communicating, in a way that is visible enough to rebuild participation over time. Here is how each step works in practice:

1. Start with the right feedback type for the situation

Before sending anything, the most important question to answer is what kind of employee feedback you actually need. Each type serves a different purpose and requires a different approach:

- Recognition feedback acknowledges specific behaviors or contributions and works best delivered in the moment, not weeks later in a formal review.

- Constructive feedback addresses a specific behavior or outcome and needs to be tied to a concrete example to be useful.

- Performance feedback evaluates results against expectations and belongs in a structured, scheduled setting with clear criteria.

- Peer and upward feedback surfaces perspectives that a manager alone cannot see, and requires anonymity protections to be honest.

- Survey-based feedback measures sentiment, clarity, or engagement at scale and is the right tool for org-wide questions, not individual ones.

Defaulting to the same format for every situation is one of the most common reasons feedback programs produce data that nobody knows how to act on. Before you send anything, ask three questions: Does this affect one person or the whole team? Is a conversation or a digital survey more appropriate? Are you trying to correct a behavior or evaluate a result? The answers will point you to the right type in most cases.

For examples of each feedback type and guidance on when to use them, read 20 Employee Feedback Examples and When to Use Them.

2. Make every piece of feedback specific, timely, and actionable

Whether you are asking employees to give feedback or sharing results back with the organization, the same three principles apply. It needs to be specific enough that the recipient knows exactly what is being referenced, timely enough that the context is still fresh, and actionable enough that there is a clear next step attached to it. Vague feedback, in either direction, creates confusion and erodes trust in the process. Every message, every survey question, and every results summary should be able to pass a simple test: does this tell someone something specific enough to act on?

3. Build a feedback system that works across every type of employee

Participation rates are almost entirely a function of trust and effort. If employees do not believe their responses are confidential, they will not be honest. If the survey takes more than five minutes, a meaningful portion of your audience will abandon it before finishing. The most effective employee feedback collection setups combine multiple channels, such as internal email with embedded pulse surveys to reduce friction and anonymous employee feedback options, with real-time dashboards that segment results by department so patterns are visible immediately.

4. Close the feedback loop with clear, visible next steps

This is where most employee feedback processes break down. Results get shared with a leadership team, everyone agrees something should change, and then nothing gets assigned to anyone. For every priority that comes out of a feedback cycle, there needs to be a named owner, a specific action, and a date by which that action will be visible to employees. Start by acknowledging and identifying the core themes. Then clarify and prioritize the issues that are both high-impact and within your control to address. Then act, with real deadlines, not standing agenda items.

5. Report back to employees with a clear “you said, we did” update

This is the step that few organizations do consistently, and it is the single highest-leverage action available to internal communications teams. According to ContactMonkey’s Global State of Internal Communications (GSIC) 2026 Report, only 15% of organizations consistently communicate the actions taken after a feedback cycle. When employees see a direct, specific connection between the feedback they gave and a change that was made, participation in the next survey goes up. When they do not see that connection, it goes down, and it takes several cycles to recover. A short internal email or a section in your next employee newsletter that publicly shares progress and outcomes is enough. The format matters far less than the consistency. Continuous employee feedback only becomes continuous when employees believe the loop is actually closing.

8 Best Ways to Collect Employee Feedback

There is no single employee feedback collection method that works for every audience, every question, or every moment. The organizations that get the most useful data are the ones that match the method to the moment rather than defaulting to the same approach every time. This guide covers eight feedback methods in full. Jump to whichever one you want to explore first:

- Pulse surveys

- Anonymous feedback channels

- Real-time and frontline feedback

- SMS and text message feedback

- Focus groups and listening sessions

- Manager conversations and skip-levels

- 360 degree feedback

- Always-on digital feedback channels

1. Pulse surveys for fast, structured, measurable feedback

Pulse surveys are short, focused employee feedback surveys sent on a regular cadence, typically weekly, biweekly, or monthly, covering a specific theme or set of engagement drivers. Unlike annual surveys that try to measure everything at once, pulse surveys are designed to track movement over time. A single pulse result tells you where things stand. A series of them tells you whether things are getting better or worse, and how quickly.

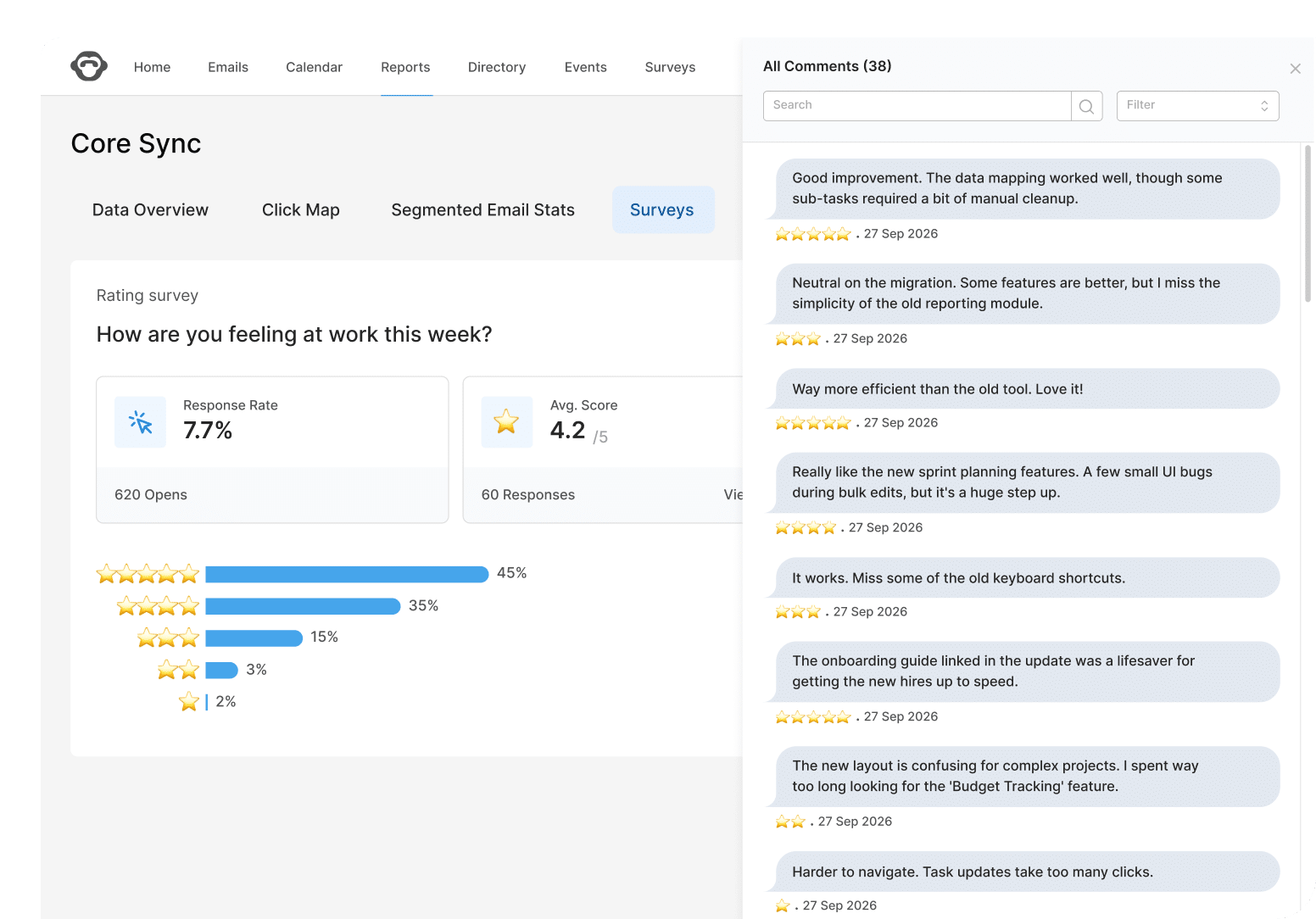

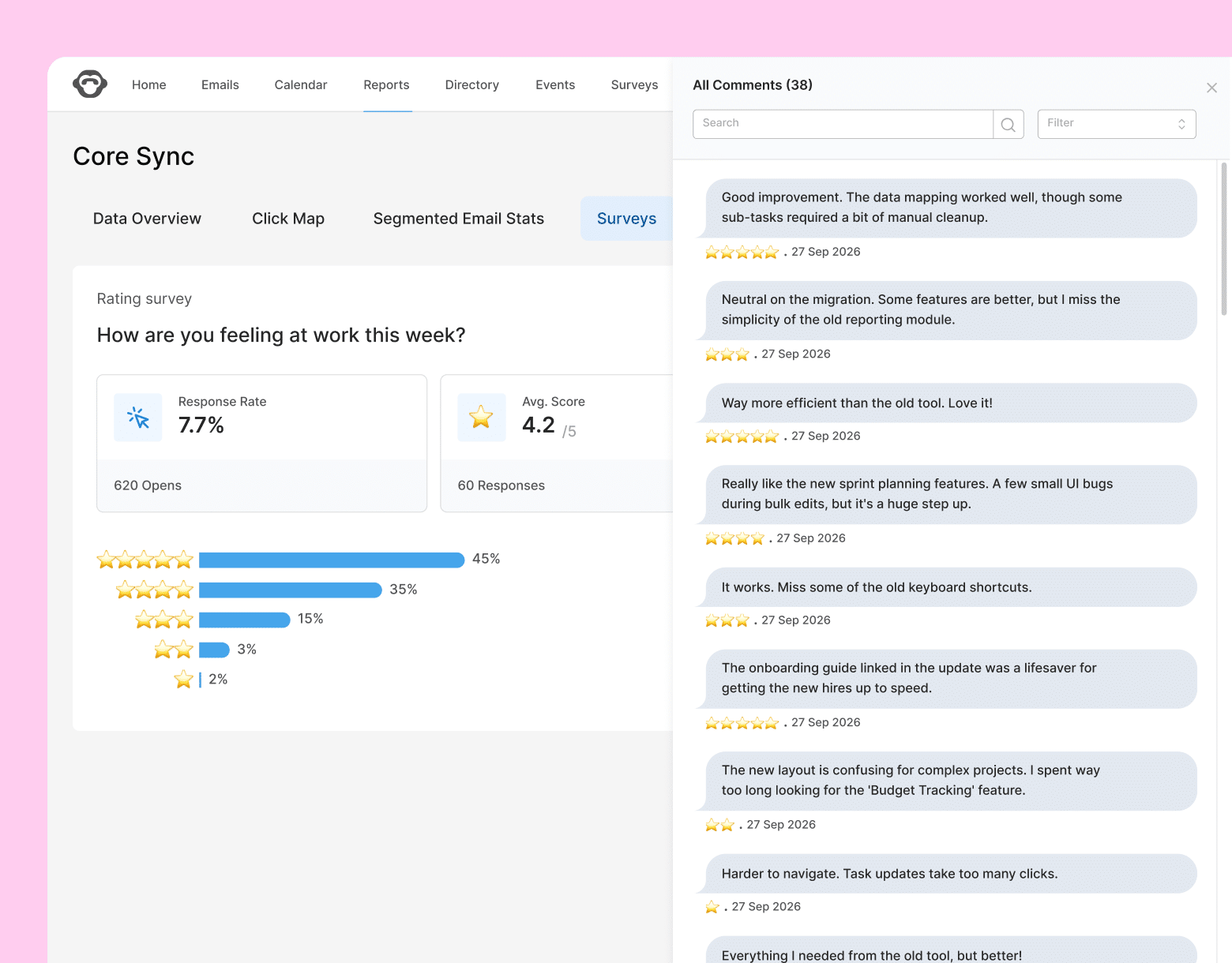

For internal communications teams specifically, pulse surveys are one of the most reliable ways to measure whether your communications are actually landing. You can track whether employees understand a new policy after it was announced, whether confidence in leadership is shifting during a restructure, or whether a change initiative is being adopted at the pace leadership expects. According to GSIC 2026, 53% of organizations already rely on short pulse surveys as part of their feedback mix, second only to employee engagement surveys, which shows how firmly they have become a standard tool for teams that need faster, more actionable data than an annual survey can provide.

What makes a pulse survey effective for internal communicators?

The most common mistake with pulse surveys is treating them like a shortened annual engagement survey, which turns a focused listening tool into something that tries to do too much at once. Each pulse should be built around a single theme, use a consistent rating scale so responses are comparable across cycles, and include at least one open-text question to capture the context and nuance that rating questions will always miss.

Sending cadence matters just as much as question design. A biweekly pulse sent on the same day and time each cycle trains employees to expect it, which gradually turns responding into a habit rather than an interruption. Rotating themes across cycles, rather than asking the same five questions every time, keeps responses honest and prevents employees from pattern-matching their answers based on what they think you want to hear.
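Because every cycle uses the same rating scale, tracking movement across cycles comes down to simple arithmetic. The Python sketch below assumes each cycle's responses are available as a list of 1-5 ratings, with cycles listed in date order; the function name `cycle_trend` and the sample data are hypothetical and not part of any particular survey tool.

```python
from statistics import mean


def cycle_trend(cycles):
    """Summarize pulse cycles that all use the same 1-5 rating scale.

    cycles: list of (label, ratings) pairs in date order.
    Returns (label, average, change_from_previous_cycle) tuples;
    the first cycle has no previous cycle, so its change is None.
    """
    trend = []
    previous = None
    for label, ratings in cycles:
        if not ratings:
            raise ValueError(f"cycle {label!r} has no responses")
        average = round(mean(ratings), 2)
        change = None if previous is None else round(average - previous, 2)
        trend.append((label, average, change))
        previous = average
    return trend


if __name__ == "__main__":
    cycles = [
        ("Cycle 1", [4, 4, 3, 5]),
        ("Cycle 2", [3, 4, 3, 4]),
        ("Cycle 3", [3, 3, 2, 4]),
    ]
    for label, average, change in cycle_trend(cycles):
        print(label, average, change)
```

A single cycle only gives you the average. The change column is what shows whether things are getting better or worse, and how quickly.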

For teams managing communications across multiple departments or locations, embedding the survey directly inside the internal email rather than linking out to a separate platform makes a measurable difference in completion rates. When an employee can answer in a single click without leaving their inbox, the participation gap between desk-based and frontline workers narrows significantly. ContactMonkey lets internal communications teams do exactly that, with results feeding into a real-time dashboard segmented by department, location, or team so you can see where sentiment is shifting before it shows up as a retention or engagement problem.

Putting it into practice: a leadership trust pulse survey

Leadership trust is one of the most important, and most often undertracked, engagement drivers in an organization. Here is what a leadership-focused pulse looks like in practice.

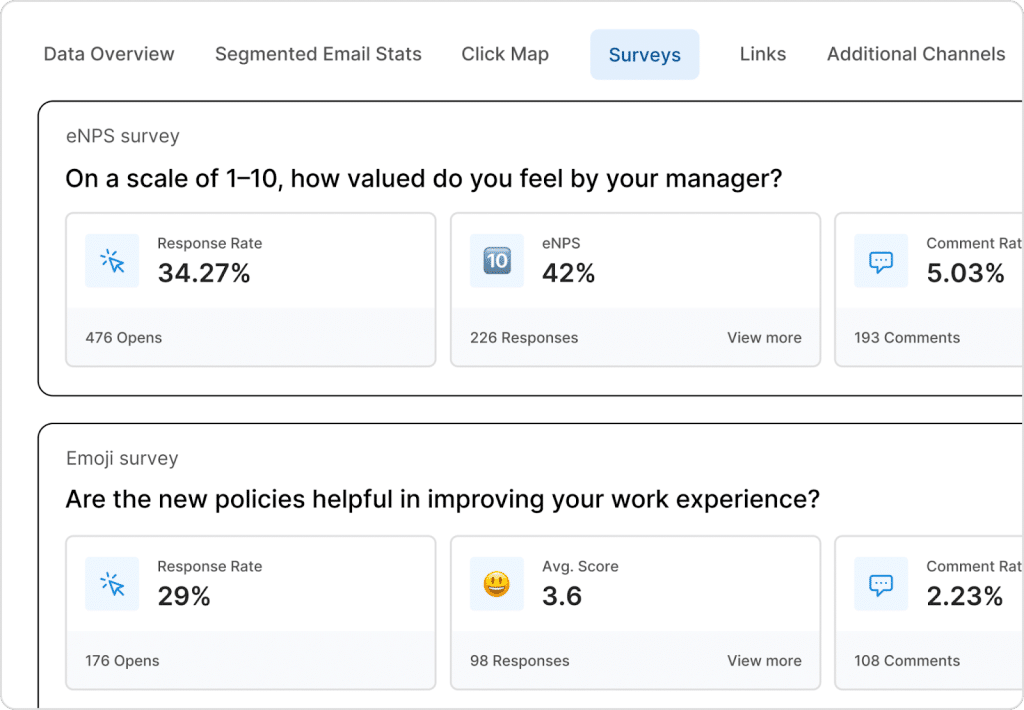

An eNPS-style question such as “On a scale of 1 to 10, how valued do you feel by your manager?” gives you a trackable score that can be compared across teams and over time. Pair it with an emoji survey question like “Are the communications from leadership helping you feel informed and confident in your role?” to capture sentiment more intuitively, particularly for employees who find numeric scales less natural. Together, these two question formats give you both a measurable score and a directional read on how employees are feeling, without requiring a lengthy survey to get there.

To build out a full leadership trust pulse, consider adding questions like:

- On a scale of 1-10, how valued do you feel by your manager?

- On a scale of 1-5, how clearly has leadership communicated the organization’s priorities over the past two weeks?

- On a scale of 1-5, how confident are you that leadership is making decisions in the best interest of employees?

- Are the new policies helpful in improving your work experience? (Emoji scale: not at all helpful to very helpful)

- What is one thing leadership could do differently to better support your team right now? (Open text)
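To turn the 1-to-10 question into a single trackable score, teams often borrow the eNPS convention: the percentage of high scorers minus the percentage of low scorers. The sketch below assumes the standard eNPS bands (9-10 counts as a promoter, 6 or below as a detractor). Classic eNPS uses a 0-10 "would you recommend" question rather than this 1-10 "how valued" one, so treat the bands, constants, and function names here as hypothetical choices you would adjust.

```python
from collections import defaultdict

# Assumed bands, borrowed from standard eNPS: 9-10 = promoter, 6 or below = detractor.
PROMOTER_MIN = 9
DETRACTOR_MAX = 6


def enps_style_score(ratings):
    """Percentage of promoters minus percentage of detractors (-100 to +100)."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= PROMOTER_MIN)
    detractors = sum(1 for r in ratings if r <= DETRACTOR_MAX)
    return round(100 * (promoters - detractors) / len(ratings))


def scores_by_team(responses):
    """responses: iterable of (team, rating) pairs on the 1-10 scale."""
    by_team = defaultdict(list)
    for team, rating in responses:
        if not 1 <= rating <= 10:
            raise ValueError(f"rating out of range: {rating}")
        by_team[team].append(rating)
    return {team: enps_style_score(ratings) for team, ratings in by_team.items()}


if __name__ == "__main__":
    sample = [("Ops", 9), ("Ops", 10), ("Ops", 6), ("Ops", 8),
              ("Sales", 5), ("Sales", 7), ("Sales", 9)]
    print(scores_by_team(sample))  # {'Ops': 25, 'Sales': 0}
```

Ratings of 7 and 8 count toward the total but are neither promoters nor detractors. That is why the Ops team lands at 25 rather than 50.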

For a deeper look at building surveys that generate reliable, actionable data, read How to Create an Effective Pulse Survey: 7 Key Steps.

2. Anonymous feedback channels for honest responses when trust is low

The single biggest predictor of honest feedback is whether employees genuinely believe there are no consequences for giving it. When trust in leadership is low, when a team has been through layoffs, or when the organization is navigating a sensitive culture issue, even well-designed surveys will produce sanitized responses if employees have any doubt about who can see what.

Collecting anonymous employee feedback effectively starts with one rule: anonymity cannot just be implied. It has to be stated explicitly, in plain language, every single time you ask. Here is language you can use directly in your survey communications: “Your responses are completely anonymous. Individual answers are never visible to your manager or HR. Results are only reported at the group level, and only when a minimum of five people have responded, so no single response can ever be traced back to you.”

The minimum group threshold detail matters more than most communicators realize. Employees in small teams are often acutely aware that even “anonymous” data can be reverse-engineered if only three people responded and two of them are known to be happy. Stating the threshold explicitly removes that concern before it becomes a reason not to respond.
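Here is one way a reporting step could honor that five-response promise. The Python sketch assumes responses arrive as (group, rating) pairs. `group_report` and the sample data are hypothetical: the function returns group averages only, and it reports any group below the threshold as `None` instead of showing its numbers.

```python
from collections import defaultdict
from statistics import mean

MIN_GROUP_SIZE = 5  # the threshold promised in the sample anonymity statement


def group_report(responses, min_group_size=MIN_GROUP_SIZE):
    """Aggregate (group, rating) pairs into group-level averages.

    Individual responses are never returned. Groups with fewer than
    min_group_size responses are reported as None (suppressed).
    """
    groups = defaultdict(list)
    for group, rating in responses:
        groups[group].append(rating)
    return {
        group: round(mean(ratings), 2) if len(ratings) >= min_group_size else None
        for group, ratings in groups.items()
    }


if __name__ == "__main__":
    responses = ([("Warehouse", r) for r in (4, 3, 5, 4, 2)]
                 + [("Finance", r) for r in (5, 5, 2)])
    print(group_report(responses))  # {'Warehouse': 3.6, 'Finance': None}
```

Suppressing small groups is only the first layer of protection. If you also publish a department total, someone could subtract the visible groups from it and recover a suppressed one. Statistical agencies handle this with techniques such as complementary suppression, which this sketch does not attempt.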

Beyond pulse surveys, anonymous employee feedback channels worth having in place include a digital suggestion box that stays open year-round rather than only during formal survey cycles, and occasional third-party moderated listening sessions for topics too sensitive to run internally, such as DEI concerns, leadership behavior, or psychological safety issues. The third-party element matters because employees are significantly more likely to speak candidly when the facilitator has no organizational stake in the outcome.

3. Real-time employee feedback for frontline and distributed teams

Most employee feedback collection systems are designed around desk-based workers. A survey lands in an inbox, gets opened between meetings, and gets submitted before the end of the day. That workflow excludes a significant portion of most workforces: the warehouse teams, healthcare workers, retail staff, and frontline employees who do not have a company email address open in front of them for eight hours a day.

Real-time employee feedback tools close that gap by meeting employees where they actually are: at the end of a shift, immediately after a training session, or right after a major operational change gets communicated on the floor. In healthcare environments specifically, where staff are moving between patients, wards, and shifts with no predictable window to sit down with a survey, real-time micro-pulses sent immediately after a shift are often the only format that generates reliable response rates. The same applies during organizational change rollouts, where waiting days or weeks for feedback means the moment has already passed and the data is less useful than it would have been in real time.

The three formats that work best in frontline environments are each designed around a single principle: zero friction.

- Micro-pulses after shifts or events. One or two questions sent immediately after a shift or company update, while the experience is still fresh. Responses collected in this window tend to be more accurate than those from surveys sent 48 hours later, when the details have faded. Keep it to a single rating-scale question and one optional open-text field.

- QR code check-ins. A QR code posted in a breakroom, locker area, or near a time clock lets employees respond on their own phone in under a minute without needing an app download or login. Works well for recurring sentiment checks and post-announcement feedback in locations where digital access is limited.

- Single-question kiosk prompts. A prompted question on a shared screen at the end of a shift captures sentiment data at scale without requiring any individual to identify themselves. Best used for simple directional questions where you need a quick read across a large frontline population.

The question design across all three formats follows the same rule: it has to be answerable in the time it takes to walk from one place to another. One question, a simple response format such as a star rating, emoji scale, or yes/no, and an immediate confirmation that the response was received. Make it any more complex than that, and completion rates will drop sharply.

For internal communications teams managing change rollouts or new policy implementations across distributed locations, real-time check-ins also serve a different purpose beyond engagement measurement. They function as an early warning system. If sentiment drops sharply at one location the day after a new policy goes live, that signal is far more useful when you receive it in real time than when it surfaces in a quarterly report three months later.
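The early-warning idea can be sketched as a comparison between each location's latest day and its own recent history. The sketch assumes daily average scores on a 1-5 scale for each location, oldest first. The half-point `DROP_THRESHOLD` and the `flag_sharp_drops` name are hypothetical and would need tuning against your own data.

```python
from statistics import mean

DROP_THRESHOLD = 0.5  # hypothetical: points on a 1-5 scale


def flag_sharp_drops(daily_scores, threshold=DROP_THRESHOLD):
    """daily_scores: {location: [daily average score, oldest first]}.

    Compares each location's latest day with the average of its earlier
    days and returns {location: size_of_drop} for drops >= threshold.
    """
    flagged = {}
    for location, series in daily_scores.items():
        if len(series) < 2:
            continue  # not enough history to form a baseline yet
        baseline = mean(series[:-1])
        drop = baseline - series[-1]
        if drop >= threshold:
            flagged[location] = round(drop, 2)
    return flagged


if __name__ == "__main__":
    scores = {
        "Site A": [4.1, 4.0, 4.2, 3.3],  # new policy went live before the last day
        "Site B": [3.8, 3.9, 3.7, 3.8],
    }
    print(flag_sharp_drops(scores))  # {'Site A': 0.8}
```

The sketch compares each site with its own history rather than with the company average. That way, a location that always scores a little lower is not flagged every day.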

4. SMS and text message feedback for fast, accessible responses

Email works well for desk-based employees. For everyone else, the open rate tells a different story. According to Forbes, SMS messages have an open rate that consistently sits above 90%, compared to the industry average for internal emails, which typically lands somewhere between 30 and 50%. For frontline workers, distributed teams, and healthcare staff who spend most of their day away from a screen, SMS employee feedback collection is often the only channel that reliably reaches them at all.

The use cases where text-based feedback consistently outperforms other channels include shift-based workforces where email access is limited, healthcare environments where response windows are narrow and unpredictable, distributed teams spread across multiple time zones, and urgent change rollouts where you need a read on employee sentiment within hours rather than days.

A practical SMS feedback playbook:

Getting SMS feedback right comes down to three things: how you get people in while staying on the right side of basic compliance expectations, how often you contact them, and how you write the questions.

- Opt-in. Employees should always explicitly opt in to receiving feedback requests by text. The clearest way to do this is during onboarding, with a plain-language explanation of what they will receive, how often, and how to stop at any time. Consent should be documented, and opting out should be as simple as replying with a single word. Never add employees to an SMS feedback list without their knowledge.

- Frequency caps. Text feels more personal than email, which means over-sending has a faster and more damaging effect on trust. A reasonable ceiling for most organizations is two to four messages per month. If you are running a specific change rollout or event-based check-in, communicate upfront that frequency will temporarily increase and for how long.

- Question design. The format has to match the medium. A five-question survey is appropriate for email. For SMS, one question per message is the standard, with a response format that requires minimal effort such as a number from one to five, a yes or no, or a single word. The question itself should be written at a conversational reading level, specific enough to be actionable, and short enough to read in a single glance. “How clear was the communication you received about the shift change this week? Reply 1 (not clear) to 5 (very clear)” is a good model.
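The three rules above (explicit opt-in, a monthly cap, one-word replies) can be sketched as a small gatekeeping step. Everything in this Python sketch is hypothetical: the `Subscriber` record, the `STOP` keyword, and the four-per-month cap taken from the upper end of the suggested range. Real opt-out keywords and consent rules depend on your SMS provider and jurisdiction, and the monthly counter reset is not shown.

```python
from dataclasses import dataclass, field

MONTHLY_CAP = 4                 # upper end of the two-to-four-per-month ceiling
OPT_OUT_WORDS = {"STOP"}        # hypothetical; use the keywords your SMS provider requires
VALID_RATINGS = {"1", "2", "3", "4", "5"}


@dataclass
class Subscriber:
    opted_in: bool = False       # set only after explicit, documented consent
    sent_this_month: int = 0     # reset at the start of each month (not shown)
    ratings: list = field(default_factory=list)


def can_send(sub, cap=MONTHLY_CAP):
    """Only message people who opted in and are under this month's cap."""
    return sub.opted_in and sub.sent_this_month < cap


def handle_reply(sub, text):
    """Classify a one-word reply: 'opted_out', 'recorded', or 'invalid'."""
    reply = text.strip().upper()
    if reply in OPT_OUT_WORDS:
        sub.opted_in = False
        return "opted_out"
    if reply in VALID_RATINGS:
        sub.ratings.append(int(reply))
        return "recorded"
    return "invalid"


if __name__ == "__main__":
    sub = Subscriber(opted_in=True)
    print(can_send(sub), handle_reply(sub, " 4 "), handle_reply(sub, "stop"), can_send(sub))
    # True recorded opted_out False
```

The opt-out check runs before anything else. That keeps stopping as simple as replying with a single word, whatever question was last sent.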

For teams using ContactMonkey, SMS feedback can be coordinated alongside email-based pulse surveys so that desk-based and frontline employees are captured in the same feedback cycle, with results consolidated in a single dashboard rather than tracked separately across different platforms.

5. Focus groups and listening sessions for the context data can’t capture

Focus groups and listening sessions are moderated small-group conversations designed to surface the qualitative context behind quantitative survey results. Survey data tells you what employees are feeling; focus groups and listening sessions tell you why. When a pulse survey comes back with a low leadership trust score and you have no idea what is driving it, or when engagement has been declining for two quarters and the open-text comments are not pointing clearly in any direction, a structured listening session is often the fastest way to get to the real issue.

There is a well-documented response bias at work here called social desirability bias, where people tend to give answers they believe are expected or safe rather than honest ones. Survey answers shaped by that bias are hard to interpret because there is no way to ask a follow-up question. A well-facilitated conversation, under the right conditions, can reduce that bias and gives people room to explain what sits behind a guarded answer, which is why the qualitative data from a listening session often reveals what months of survey data has been obscuring.

When to use a listening session over a survey:

The clearest signal that a focus group is the right move is when your quantitative data is ambiguous. Run one when you see any of the following:

- A score drop without an obvious cause in your pulse or engagement data

- Consistently low ratings in a specific team or location with no clear pattern in open-text comments

- The same topic surfacing repeatedly in comments but without enough detail to act on

- A sensitive topic such as psychological safety, leadership behavior, or a culture concern that a survey will not capture honestly

- A major change on the horizon where you want to understand employee concerns before communications go out, not after

How do you run a focus group without introducing bias?

The facilitator is the most important variable. An internal HR representative running a session about senior leadership behavior creates an obvious conflict of interest that most employees will immediately sense, even if nobody says it out loud. For sensitive topics, an external facilitator or someone from a part of the organization with no stake in the outcome will consistently produce more candid responses.

The session itself should be structured around prepared prompts rather than open-ended conversation, which tends to drift and makes synthesis difficult. Good prompts are specific, neutral in framing, and designed to draw out concrete experiences rather than general opinions. “Can you walk me through a recent situation where you felt communication from leadership was unclear?” will produce more actionable responses than “What do you think about how leadership communicates?”

Keep the group size between five and eight people. Smaller than that, and participants often feel too visible to be fully candid. Larger than that, and the conversation gets dominated by a few voices while others disengage.

Making sense of what came out of the room

The output of a listening session is only as useful as the process used to analyze it. After each session, the facilitator should produce a structured summary organized around themes rather than a transcript of what was said. Attribute nothing to individuals, even in internal documents. Group similar comments together, note how frequently each theme came up, and flag any themes that appeared across multiple sessions, as those are the ones most likely to represent a systemic issue rather than an individual experience. That synthesis document becomes the bridge between the qualitative conversation and the quantitative survey data, and when the two point in the same direction, you have something genuinely actionable to bring to leadership.
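The counting part of that synthesis can be sketched as follows. The sketch assumes the facilitator has already coded each comment with a theme label and removed names. The `synthesize` function and the two-session cutoff for "appeared across multiple sessions" are hypothetical choices.

```python
from collections import Counter


def synthesize(sessions, min_sessions=2):
    """sessions: {session name: [theme label for each comment]}; no names attached.

    Returns (how often each theme came up overall,
             sorted themes that appeared in at least min_sessions sessions).
    """
    mentions = Counter()
    spread = Counter()
    for themes in sessions.values():
        mentions.update(themes)
        spread.update(set(themes))  # count each theme once per session
    cross_session = sorted(t for t, n in spread.items() if n >= min_sessions)
    return mentions, cross_session


if __name__ == "__main__":
    sessions = {
        "Session 1": ["workload", "workload", "tools"],
        "Session 2": ["workload", "recognition"],
        "Session 3": ["tools", "career growth"],
    }
    mentions, cross_session = synthesize(sessions)
    print(mentions.most_common(2))  # [('workload', 3), ('tools', 2)]
    print(cross_session)            # ['tools', 'workload']
```

The sketch keeps total mentions and cross-session spread separate on purpose. A theme repeated many times in one room may reflect a single vocal group, while a theme that shows up in several sessions is more likely to be systemic.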

6. Manager conversations as a continuous source of honest feedback

Surveys capture what employees are willing to say to an organization. One-on-one conversations capture what they are willing to say to a person they trust. For internal communications and HR teams, that distinction matters because the most actionable feedback, the kind that reveals what is actually happening inside a team, almost never surfaces in a structured survey. It comes out in a conversation with a manager who has built enough trust to ask the right questions and listen without defensiveness.

The challenge is that most managers were never taught how to run a feedback conversation well. They either treat 1:1s as status updates, moving through project lists without ever asking how the employee is actually experiencing their work, or they ask questions so broad that they produce equally broad answers that are impossible to act on. “How is everything going?” will almost always produce “Fine.” The question design matters as much in a conversation as it does in a survey.

Skip-level meetings are worth knowing about if you have not come across them before. A skip-level is a structured conversation in which a senior leader meets directly with employees further down their reporting line, bypassing the usual management layer in between. Most employees never get direct airtime with senior leadership, which means the concerns, ideas, and frustrations that never make it past a middle manager stay invisible at the top. Skip-levels fix that by giving employees a channel to share what they might not feel comfortable saying to their direct manager, and they give senior leaders an unfiltered read on what is actually happening on the ground.

A 6-question manager conversation guide:

These questions are designed to surface honest, specific feedback in a 1:1 setting without making the conversation feel like a formal review or an interrogation. Managers do not need to ask all six in a single session. Two or three, asked consistently over time, will produce more useful information than six asked once and never revisited.

- What part of your work has felt most frustrating or unclear over the past few weeks?

- Is there anything I could be doing differently that would make your job easier or more manageable?

- How well do you feel you understand where the team is headed and why decisions are being made the way they are?

- Is there anything you have been hesitant to bring up that you think I should know about?

- What is one thing about how we work together as a team that you think we should change?

- What would make you feel more supported or recognized in your role right now?

Building a manager feedback toolkit that keeps conversations confidential

The reason most 1:1 feedback never makes it to the people who could act on it is straightforward. Managers do not have a system for capturing and escalating themes without attributing them to specific individuals. Without that system, valuable signals either get lost entirely or get shared in ways that make employees less likely to be candid in future conversations.

Here is a lightweight toolkit that internal communicators can give managers today:

| What to do | How to do it | Why it matters |
| --- | --- | --- |
| Log themes after every 1:1 | Note the general topic, the category it falls into (workload, communication, tools, culture), and whether it has come up before. No names, no direct quotes. | Patterns only become visible when they are written down. Memory is selective and unreliable across a full team. |
| Talk about what, not who | When the same concern comes up across three or more conversations, bring the pattern to HR or leadership rather than the individual comment. “Several people have raised concerns about workload clarity this month” is enough. | Employees stay candid when they trust their manager is not reporting back verbatim. |
| Agree on when to flag something | Decide in advance that any theme showing up in more than a third of conversations in a single month gets shared upward. This removes the guesswork around when something is worth raising. | Managers are more consistent when there is a clear, simple rule to follow rather than a judgment call every time. |
| Use shared category labels | When all managers tag themes using the same language, the data becomes comparable across teams. You can quickly see whether a concern is isolated or widespread. | One team flagging burnout looks like a team problem. Five teams flagging it looks like an organizational one. |
| Tell managers when their input led to something | When a pattern gets raised and action gets taken, close the loop with the manager who flagged it. | Managers who see their input making a difference become far more consistent about tracking and sharing it. |
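The "agree on when to flag something" rule is concrete enough to check mechanically. This Python sketch assumes each manager logs one set of shared category labels per 1:1, with no names or quotes. The function name and data shape are hypothetical. The sketch implements only the "more than a third of conversations in a single month" rule; the three-conversation rule from the table could be swapped in the same way.

```python
from collections import Counter
from fractions import Fraction

# Shared category labels from the toolkit; a real team would agree its own list.
CATEGORIES = {"workload", "communication", "tools", "culture"}


def themes_to_escalate(conversation_logs, share=Fraction(1, 3)):
    """conversation_logs: one set of category labels per 1:1 held this month
    (no names, no quotes). Returns categories raised in MORE than `share`
    of those conversations, the point at which the toolkit says to share upward.
    """
    total = len(conversation_logs)
    if total == 0:
        return []
    counts = Counter()
    for labels in conversation_logs:
        unknown = set(labels) - CATEGORIES
        if unknown:
            raise ValueError(f"unrecognized labels: {sorted(unknown)}")
        counts.update(set(labels))
    return sorted(c for c, n in counts.items() if Fraction(n, total) > share)


if __name__ == "__main__":
    logs = [{"workload"}, {"workload", "tools"}, {"communication"},
            {"workload"}, set(), {"tools"}]
    print(themes_to_escalate(logs))  # ['workload']
```

`Fraction` keeps the comparison exact. A theme raised in exactly a third of conversations, like `tools` in the sample, is therefore not flagged, which matches the "more than a third" wording. The label check also enforces the shared-category rule, so a mistyped label fails loudly instead of splitting one theme into two.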

7. Collecting 360 feedback that people actually trust

In most organizations, performance conversations only go in one direction. A manager tells an employee how they are doing, and that is usually where it ends. 360-degree employee feedback changes that by gathering input from everyone who works closely with that person (peers, direct reports, and cross-functional collaborators), not just the person above them. What you get back is often more honest, more complete, and more useful for development than anything a single manager review can surface on its own. According to Gallup’s The Human-Centered Workplace report, employees who receive meaningful feedback are nearly four times more likely to be engaged at work, and a well-run 360 employee feedback process is one of the most direct ways to deliver that kind of input at scale.

360 feedback delivers real value under a specific set of conditions:

- The purpose is development, not performance evaluation or compensation decisions. The moment it gets tied to pay or promotion, respondents become strategically cautious and the data loses its honesty almost immediately.

- Participants have been given guidance on how to give useful feedback before they fill out the form. Without that, most people default to vague ratings that tell the recipient nothing actionable.

- Results are shared with the individual in a supported setting, with a manager or coach present to help them interpret what they are reading and identify one or two concrete next steps.

- It is run no more than once a year for most roles. More frequent than that and it starts to feel less like development and more like surveillance.

When does 360 feedback backfire? The most predictable failure mode is tying 360 results to compensation or promotion decisions, which immediately changes how honestly people respond. Sending the form to too many people is another: five to eight respondents is the right range for most roles, and anything beyond that produces diminishing returns. Skipping the debrief is a third common mistake. A 360 report landing in someone’s inbox without context or support is one of the fastest ways to create defensiveness rather than growth. And running it for junior employees who have limited peer and direct report relationships will produce data too thin to be meaningful.

Example feedback categories: A good 360 form keeps its focus narrow, covering a small set of behavioral categories rather than trying to evaluate everything at once. Peer and direct report responses should always be aggregated in the final report so no individual response can ever be traced back to a specific person.

| Category | Example question |
| --- | --- |
| Communication and clarity | Does this person communicate decisions and updates in a way that is clear and timely? |
| Collaboration | Does this person make it easier or harder for the people around them to do their best work? |
| Reliability | When this person commits to something, does it get done? |
| Leadership and direction | Does this person give their team a clear sense of priorities and purpose? |
| Openness to feedback | Does this person respond to input and different perspectives with curiosity rather than defensiveness? |

8. Always-on digital feedback channels that run in the background

Always-on digital feedback channels are continuously running touchpoints (e.g., embedded widgets, chatbots, and AI-assisted tools) that collect employee feedback between formal survey cycles. Most employee feedback collection happens in bursts: a survey goes out, a window closes, and then nothing happens for weeks or months until the next one. Always-on channels fill that gap with low-friction touchpoints that run in the background, without requiring an internal communications team to trigger anything manually.

According to ContactMonkey’s GSIC 2026 report, 57% of internal communicators are prioritizing AI in the workplace as a top focus area this year, and the shift toward always-on digital feedback channels is a direct reflection of that. The organizations moving fastest on this are the ones treating feedback infrastructure the same way they treat their communications infrastructure: something that should be running all the time, not just when someone remembers to send a survey. The three formats driving this shift right now are worth understanding in detail:

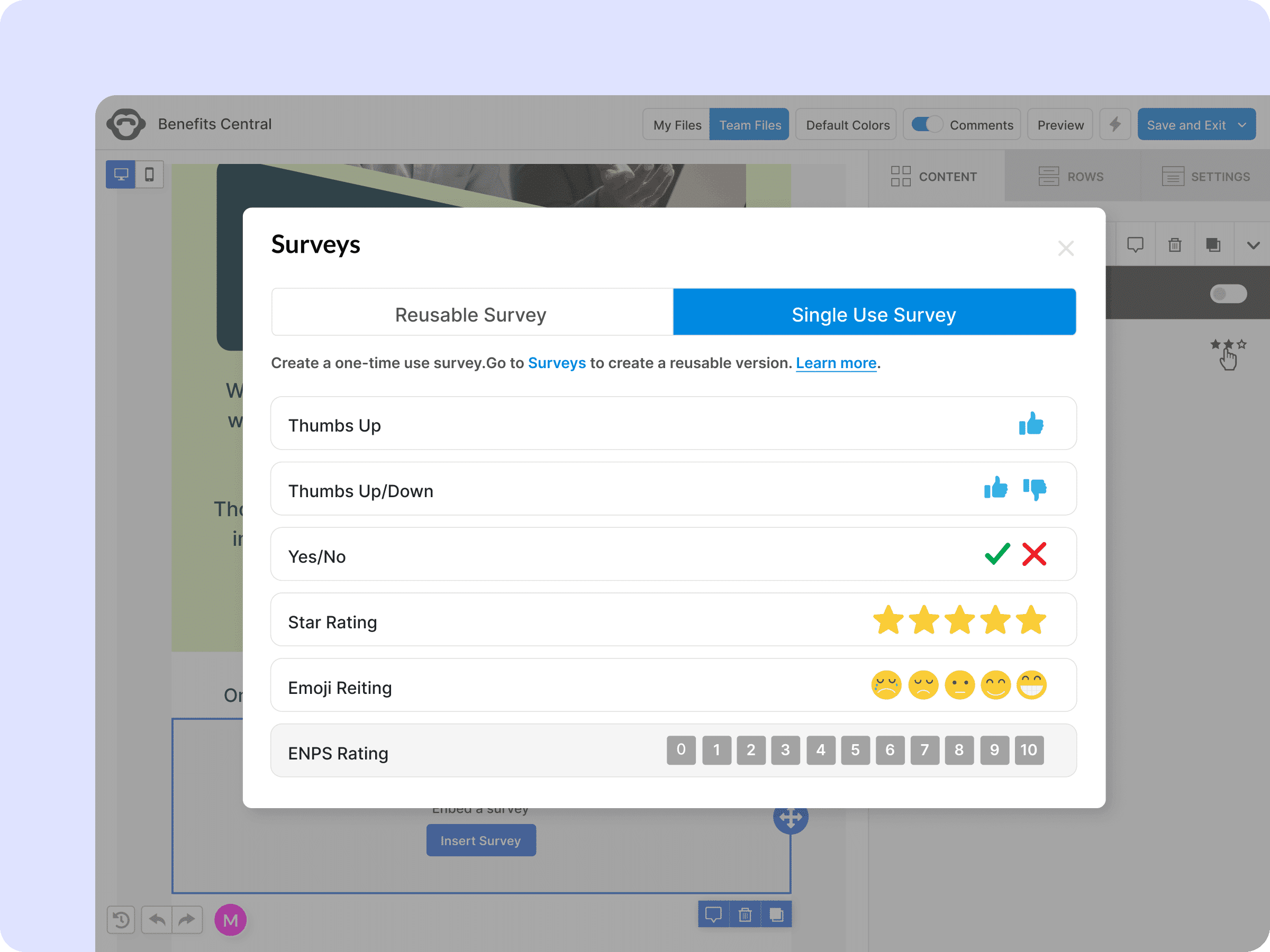

1. In-app micro-feedback widgets: A small prompt embedded directly inside an internal tool, an intranet, a digital newsletter, or an employee portal that asks a single question at a relevant moment. An employee finishes reading a company update and sees “Was this helpful?” with a quick star rating or thumbs up or down. Someone completes an onboarding module and gets a one-question pulse before moving on. For internal communications teams looking for new tools to collect employee feedback, embedded micro-feedback widgets are one of the most practical options available right now.

- A practical example: an internal communications team sends a newsletter announcing a new hybrid work policy. Rather than waiting for a follow-up survey, they embed a single emoji reaction at the bottom of the email asking “How do you feel about this change?” Results come back within hours, segmented by department, and the team can see that one location is responding much more negatively than the others before a single manager has flagged it. Because the feedback is collected in context, it tends to be more accurate than a survey sent hours or days after the fact.

2. Chatbots for quick check-ins: Conversational check-ins delivered through tools employees already use daily, whether that is an internal messaging platform or a mobile app, can surface feedback that a formal survey never would. The conversational format feels less clinical than a form, which tends to produce more candid and specific responses. In 2026, with most organizations operating in hybrid environments where employees are distributed across locations and time zones, chatbot check-ins are particularly valuable for reaching people who would otherwise fall through the gaps of a traditional survey cadence. They work well for onboarding feedback, post-training sentiment, and manager check-ins where speed matters more than depth.

3. AI-assisted comment theme clustering: Open-text responses are where the most honest employee feedback lives, and they are also the hardest to analyze at scale. AI-assisted theme clustering reads across hundreds of open-text comments and groups them by topic, so internal communications teams can see in minutes what would otherwise take days of manual work. The governance piece matters here: any AI-assisted analysis should have a human review layer before findings get shared with leadership, and employees should know upfront that comments may be analyzed using automated tools. Transparency about how AI is being used in your feedback process is becoming a baseline expectation in 2026, not an optional disclosure.

For teams using ContactMonkey, these capabilities sit alongside email-based pulse surveys in a single platform, so feedback collected across multiple digital touchpoints feeds into the same dashboard rather than living in separate tools that nobody has time to reconcile. For a deeper look at how AI is reshaping internal communications more broadly, read AI and the Future of Communication: What’s Actually Changing and What Isn’t.

Best Practices For Collecting Employee Feedback

The method matters, but so does how you run it. Even the best-designed feedback program will see participation drop if employees feel surveyed rather than heard. These are the practices that make the difference between a feedback culture and a feedback exercise.

- Ask fewer questions, more often → A three-question pulse sent monthly will consistently outperform a 25-question survey sent once a year. Shorter surveys respect employee time, produce more honest responses, and give you data you can actually act on before the moment passes.

- Rotate topics rather than repeating the same questions → Asking the same five questions every cycle trains employees to pattern-match their answers. Varying the theme keeps responses genuine and signals that the organization is listening to different things at different times.

- Make it easy and honest to respond → Neutral question design and accessibility are two sides of the same coin. Leading questions produce skewed data that feels validating but is useless for decision-making, so write questions that describe the experience rather than assume it. At the same time, mobile-friendly formats, short completion times, and where relevant, multilingual options ensure that participation is not inadvertently limited to employees who sit at a desk and speak English as a first language.

- Show proof of action before the next survey goes out → The single biggest driver of participation is evidence that the last round of feedback changed something. A “you said, we did” update before launching a new survey cycle is what keeps the next response rate from dropping.


How to Close the Employee Feedback Loop in 14 Days

Results come in, themes get discussed, and then the moment passes. Two weeks later, nothing has visibly changed and employees have already started to notice. This 14-day framework is designed to prevent exactly that.

| Timeframe | Action | What good looks like |
| --- | --- | --- |
| Days 1 to 3 | Summarize and prioritize | Produce a plain-language summary organized by theme, not by question. Identify one or two priorities that are high-impact, within your control, and specific enough to have a named owner and a realistic deadline. Trying to act on five things at once is how action plans become shelf documents. |
| Days 4 to 7 | Publish what you heard | Send a brief communication to employees summarizing the key themes in honest, plain language. Keep it short and avoid over-qualifying. Use this as a starting point: “In our last survey, you told us [theme 1] and [theme 2] were affecting your experience most. Here is what we are doing about it, and by when.” |
| Days 8 to 14 | Deliver visible change and communicate it | The action does not have to be a large structural change. A policy clarification, a process adjustment, or a commitment to investigate something further with a specific timeline attached is enough. What matters is that it is visible, specific, and communicated back through the same channels used to collect the feedback. |

Close the loop with a “you said, we did” update

According to GSIC 2026, only 15% of organizations consistently communicate what changed after a feedback cycle. This table shows what that communication looks like in practice.

| You said | We did |
| --- | --- |
| Communication from leadership during the restructure felt unclear and inconsistent | We are introducing a leadership update every other Monday, starting next week |
| Workload expectations after the team restructure were not clearly communicated | Each team lead will publish a revised priorities document by the end of this week |
| Onboarding support for new starters in the first 30 days felt insufficient | We have added a structured 30-day check-in with HR to every new hire’s first month |

What Does Good Employee Feedback Software Look Like in 2026?

Running a feedback program manually is possible, but it does not scale. Copying survey responses into spreadsheets, chasing non-responders one by one, trying to spot themes across hundreds of open-text comments, and then drafting a follow-up communication to close the loop all add up quickly. ContactMonkey’s GSIC 2026 report found that 78% of internal communicators say creating and sending content takes up most of their time, leaving very little capacity for the analysis and follow-through that actually make a feedback program worth running.

In 2026, the most effective internal communications teams are working with better infrastructure rather than longer hours. Employee feedback software exists to solve exactly that problem. It handles distribution, reminders, response tracking, and analysis automatically so teams can focus on acting on what they hear rather than managing the mechanics of collecting it. When evaluating an employee feedback platform, these are the capabilities you should look for:

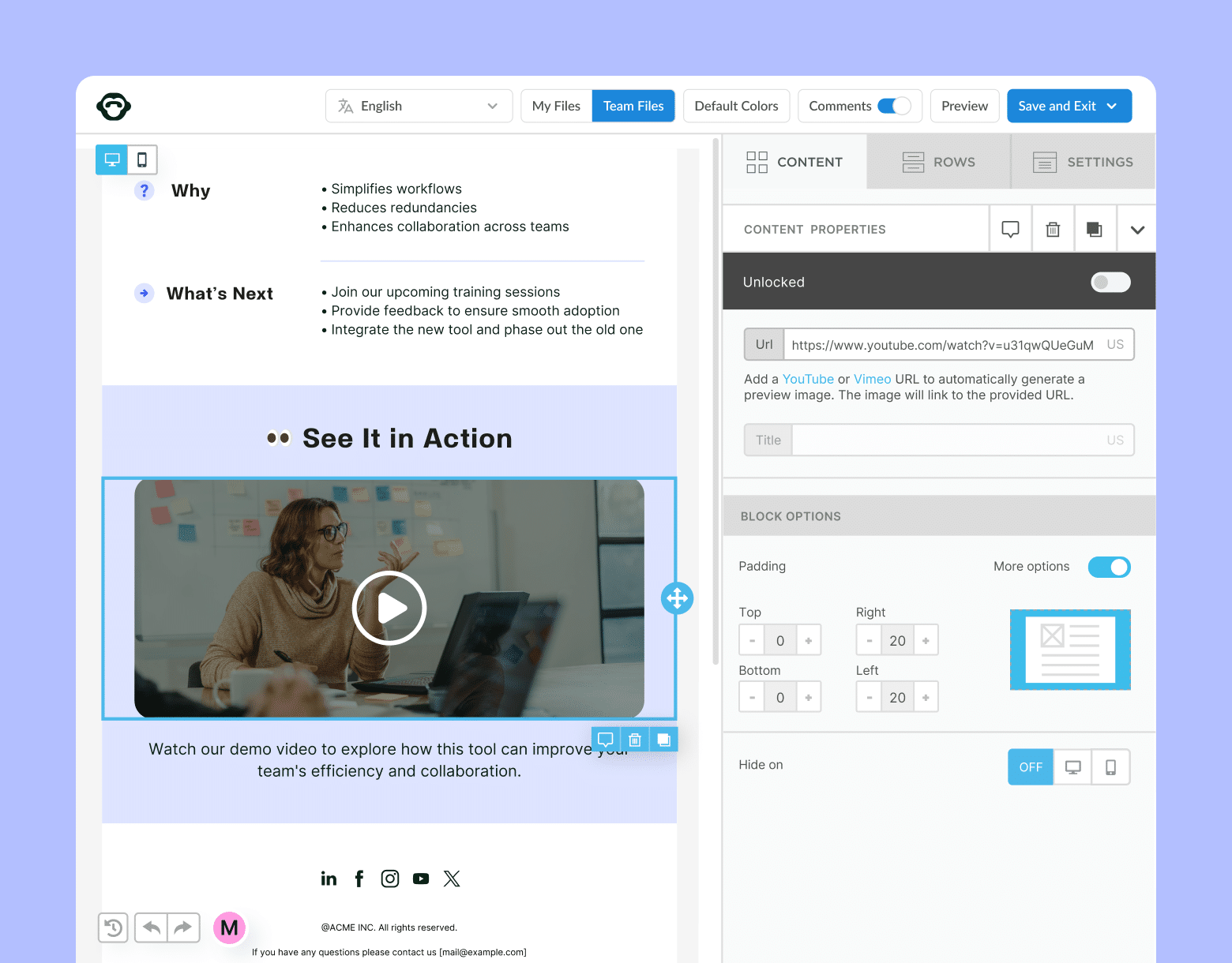

One-click feedback embedded in every communication

Most survey tools require employees to click a link, open a new tab, log in, and complete a form before submitting a single response. Every one of those steps is a reason to abandon it. ContactMonkey embeds pulse surveys, emoji reactions, star ratings, eNPS scores, polls, and open comment boxes directly inside internal emails and newsletters so employees respond in a single click without ever leaving their inbox. The result is higher response rates and feedback collected in the same moment employees are engaging with your content.

Multi-channel feedback collection that reaches every employee

A single channel will never reach your entire workforce. The right employee feedback system should collect responses through email, SMS, Microsoft Teams, and SharePoint so desk-based and frontline employees are captured in the same feedback cycle rather than tracked separately. ContactMonkey distributes surveys across all of these channels from a single platform, so your internal communications team is not managing multiple tools to reach different audiences.

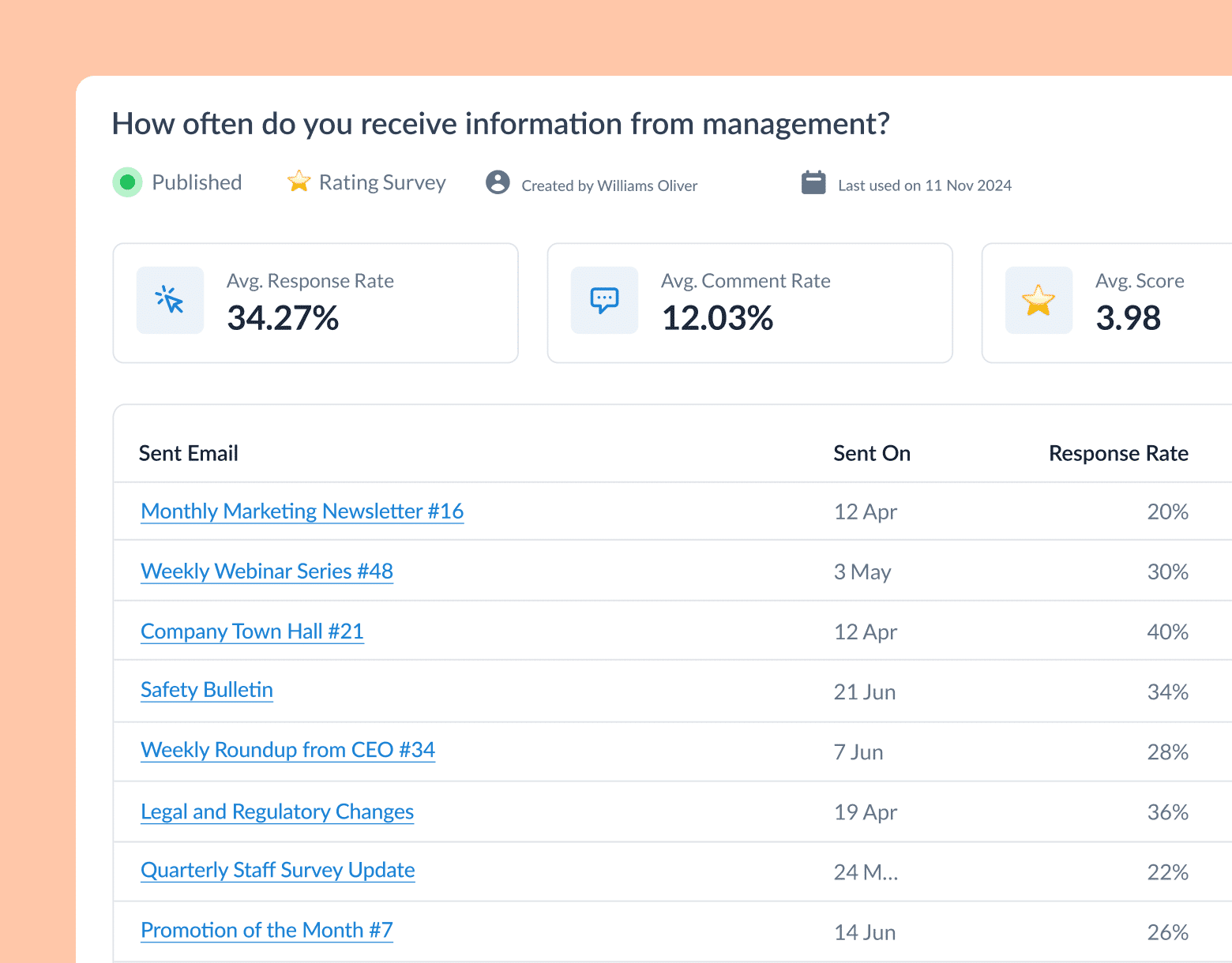

Real-time dashboards and feedback analytics that show what’s working

Raw response data is not useful on its own. Look for a platform that surfaces participation rates, sentiment trends, segment-level breakdowns by department or location, and open-text theme analysis in a format that can be shared directly with leadership. ContactMonkey’s real-time dashboard does exactly that, with leadership-ready reports that show movement over time rather than just a point-in-time score, which is what turns feedback data into a credible business case.

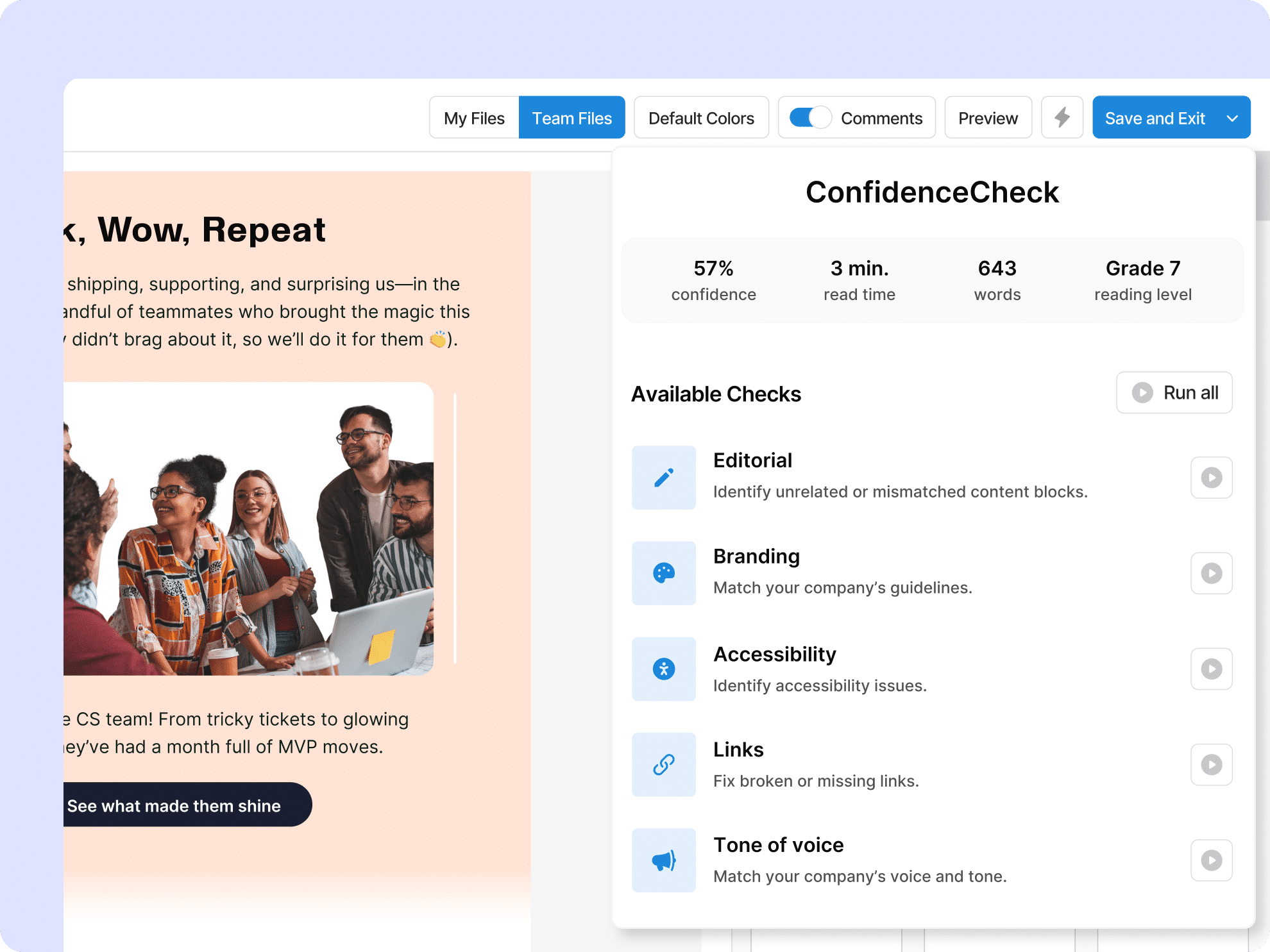

AI that improves your feedback comms before they reach employees

A good employee feedback platform should have AI built into the places where internal communications teams actually lose time. ContactMonkey’s AI email builder generates survey content and email copy directly inside the builder, so teams spend less time staring at a blank template and more time refining the message. ConfidenceCheck reviews communications before they go out and flags potential clarity issues, tone problems, or missing context, which reduces the risk of sending something that creates more confusion than it resolves. For small internal communications teams managing high-volume send schedules, this is one of the most practical time-saving features available right now.

Dynamic content that makes feedback feel relevant

Generic, one-size-fits-all survey sends are one of the quietest drivers of low participation. When an employee in a warehouse receives the same feedback request as someone in a corporate head office, neither feels particularly spoken to. ContactMonkey’s dynamic content feature lets internal communications teams build a single email with multiple content blocks, each tailored to a different audience segment, without duplicating or manually recreating the message for each group. A question relevant to managers can appear only for managers. Location-specific feedback prompts show up only for the relevant office. The result is feedback requests that feel targeted and relevant, which meaningfully improve both open rates and response quality.

Audience segmentation that sends the right survey to the right people

Feedback is only as useful as it is specific. Sending a pulse survey to your entire organization when you only need to hear from one department produces noisy data that is harder to act on. ContactMonkey’s audience segmentation lets internal communications teams group employees by department, role, location, seniority, or any custom attribute, and then target surveys precisely to the group whose feedback actually matters for that particular question. Results can then be analyzed by segment in the same dashboard, so you can see not just how the organization feels overall but where specific teams, locations, or roles are diverging from the broader picture.

Start Building a Feedback Loop That Actually Closes With ContactMonkey

Employee disengagement is not a new problem, but the conditions driving it in 2026 are more complex than they have ever been. Employees are navigating a world that has made trust harder to extend and skepticism easier to justify, and they are bringing that context to work with them every day. The organizations that will close the culture gap are not necessarily the ones with the biggest budgets or the most sophisticated HR programs. They are the ones who have built a genuine, repeatable system for hearing from their people and doing something visible with what they hear.

The methods, internal email templates, and frameworks in this guide are a starting point. But the difference between a feedback program that sustains participation over time and one that quietly loses it comes down to infrastructure: the right channels, the right tools, and a process that makes closing the loop as consistent as opening the survey. That is what ContactMonkey is built for.

Book a demo today to see how ContactMonkey helps internal communications teams build a feedback culture that employees actually believe in.